The Online Safety Bill: does the duty of care go far enough?

The forthcoming Online Safety Bill is back in the headlines, after Prime Minister Boris Johnson committed to returning the draft Bill to Parliament before Christmas.

This is an important opportunity to raise awareness of elements we felt were missing from the draft Bill, as set out in our full response to it.

The draft Bill requires all companies to have a ‘duty of care’ for all children: a legal requirement to act to protect younger users from harm. The largest sites (known as Category 1, and including Facebook and Twitter) will additionally be required to take action on harms affecting users over 18.

While this is – in theory – a positive step, the duty of care needs to be more responsive to existing harms, as well as new risks arising from emerging innovations and platforms.

New functionalities, new harms

New and emerging services can generate significant harms in a short space of time. For example, the subscription-based video platform OnlyFans grew from 7.5 million users to 85 million users in less than a year. Subscribers pay content creators a monthly fee to view their pictures and videos, and creators can offer additional paid services such as private messaging or personalised content.

Although sex work is not the only type of content available, the platform has become closely associated with facilitating in-stream payments for it, as investigated by the youth content platform VoiceBox in a report published earlier this year.

While the site does employ age verification mechanisms, young people are sidestepping them as both creators and subscribers. As the report outlines, this puts them at risk not only of financial exploitation and exposure to adult content, but also of damage to their self-esteem, their mental health and their views on sex and relationships.

The Online Safety Bill categorises platforms by size, giving smaller platforms an opportunity to cause harm as they grow, until they cross the threshold for more stringent sanctions. Regulation needs to get “upstream” of these rapidly developing platforms to mitigate harm to users, and to be “horizon-scanning” for problematic emerging sites and functionalities.

Similarly, the current duty of care will not cover new and emerging platforms and functions that may be high-risk-by-design. A more overarching duty could bring them within scope, and reinforce the importance of safety by design: placing safety considerations at the very heart of platforms and services.

Functions mimicking gambling

As it stands, the Bill will not cover online gaming, which is a cause for great concern. As Parent Zone has identified in previous research, the gaming industry shares worrying functionalities with the gambling industry.

For example, loot boxes are virtual ‘treasure chests’ containing undisclosed items, such as new weapons or ‘skins’ to customise characters. They can be highly desirable as a signal of status, even when they do not affect a player’s progress through the game. They are designed to keep young players playing – and paying.

Other countries have introduced regulation of loot boxes, and the UK needs to do the same. Otherwise, the Bill cannot realistically achieve its aim of making the UK the safest place for children to go online.


Duty to take action

The communications regulator, Ofcom, will oversee the duty of care – but it remains unclear how it will review companies’ own risk assessments in order to do so.

Facebook, for example, has come under fire in recent weeks over a number of allegations – based on documents leaked by a whistleblower – about its approach to content and functionality across its platforms that could be harmful to young people.

The duty of care must be extended to a duty to take action on both emerging and longstanding functionalities, requiring companies to act in users’ interests as soon as they become aware that those users are at risk of harm.

Seizing the opportunity

The Bill is an opportunity to reduce the harm people experience online – but it will not do so if serious financial harm is left unregulated and emerging platforms are able to slip through the net.


Latest Articles

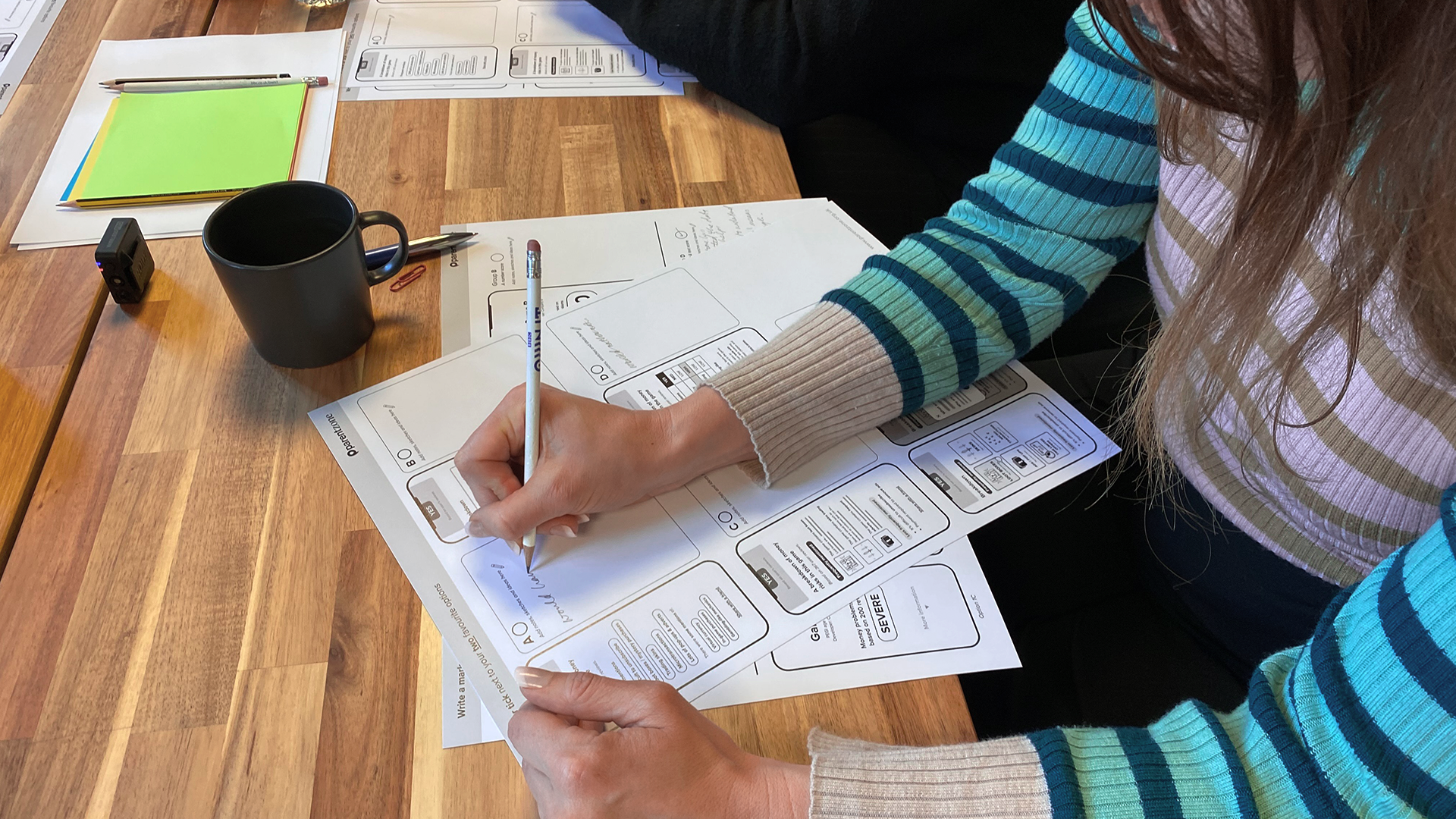

Designing a game rating tool that helps parents

Parent Zone is developing a new age-rating-style tool to transform confusing financial features into clear, usable information, helping parents make informed decisions about their children’s games.

"The Teacher and the Teenage Brain"

A guest blog by Dr John Coleman, professor at the University of Bedfordshire.

What is the age-appropriate design code – and how is it changing the internet?

We explain what the age-appropriate design code is, and what it means for families.