What parents need to know about TikTok

TikTok has become one of the most popular social media apps on the planet, currently boasting 1.7 billion monthly active users.

Young people in particular love its short-form video focus, and it is now one of Generation Z’s favourite tools of expression.

Parent Zone and TikTok have worked together to create a series about safety for teens when using the platform. Watch this video below for a quick introduction to parenting a young person on TikTok:

So what is TikTok, and are there any risks to be aware of? Here's everything you need to know.

What is TikTok?

TikTok was born out of a merger between two already popular apps, Douyin and Musical.ly. It’s based around many of the same features found on those platforms and is primarily a social media app where users can both create and watch short video snippets, often accompanied by music.

Since its launch, the app has consistently stayed at the top of both the Google Play Store and Apple App Store charts.

Users don’t need an account to watch videos on TikTok but if they want to like, comment, customise their feed or create their own video content, they’ll be prompted to sign up for a free account.

Why is it so popular?

You can find videos relating to almost all interests on TikTok, from DIY tricks and make-up tutorials to gaming and sports. People are allowed to let their imagination run wild on TikTok, as there isn’t really a ‘right’ or ‘wrong’ type of content. You might use TikTok to pick up new skills, learn how to play an instrument or even connect with people you share interests with.

The videos – which, as of February 2022, can be up to 10 minutes in length – are often playful and take maximum advantage of the editing tools to make them as memorable as possible.

Although most of the content you will find is upbeat, funny and joyful, people also use the platform to respond to political events and movements – such as the #BlackLivesMatter campaign – and the COVID-19 pandemic.

In contrast to most of its competitors, TikTok doesn’t require the user to add any information to their profile: they’re issued with a user number, but whether they add a name, profile picture or any other personal information is their choice.

Users are given complete creative control of their content. Putting together a video is very easy and there’s a range of tools available to spruce up the content, such as filters, effects, text and stickers.

What do parents need to be aware of?

Age restrictions

TikTok requires its users to be at least 13 years old. A little confusingly, though, the app's age ratings are 12+ on Apple's App Store and "Parental Guidance Recommended" on the Google Play Store.

When signing in for the first time, the user will be asked to log in using their email or their Google account, or by linking TikTok to one of their other social media accounts, for instance Facebook or Twitter.

In January 2021, TikTok updated its privacy settings so that accounts for under-16s are set to private by default. This means that other users must be approved before they can see and interact with your child’s content or contact them.

Note also that standard TikTok accounts (rather than TikTok for Younger Users profiles) will be taken down if moderators suspect that the individual operating the account is under the age of 13. This can be a useful piece of information if you’re trying to resist the ‘pester power’ of younger children – there’s less of a point getting around age restrictions if the account will be taken down anyway.

You can read more about their age-appropriate policy here.

Screen time

As of March 2023 TikTok has introduced a daily screen time cap of 60 minutes which applies to all accounts belonging to users under the age of 18 by default. Although teens are able to turn off the default limit themselves (or of course lie about their actual age when signing up) they will be prompted to set a daily limit every time they spend more than 100 minutes a day using TikTok.

Teens will also be sent an inbox notification once per week with a recap of just how much time they've spent on the app, and users are able to set 'break reminders' – notifications which remind you to take a breather from TikTok after periods of uninterrupted use.

The app now also includes a 'screen time dashboard', something designed to let individuals know what their time spent on TikTok looks like. Users can again get a summary of just how much they use the app, and also are given insight into how often they open TikTok, how their usage this week compares to last week, and what time of day (or night) they use the app most.

Parental controls and safety

TikTok offers its users a range of settings to customise their experience and make it safer for young people, which they refer to as ‘Family Pairing’. These settings allow parents to control elements of their child’s TikTok account if their child is under 16.

The features include the option to decide what your child can search for on the app, set screen time limits, disable comments on your child’s videos and decide if other users can view their ‘liked’ videos. As a parent, you won’t have access to your child’s actual videos. It's also worth noting that children under the age of 16 are not able to engage in direct messaging.

These controls have recently been tightened up as part of a concerted effort by the platform to make it safer for its younger users. Although it’s important to bear in mind that parental controls don’t eliminate risk, they can be a good first step. In conjunction with their ‘Family Pairing’ settings, TikTok has also included tips from teenagers. This is designed to encourage dialogue between parents and children around online safety and privacy.

You can view TikTok’s Guardian’s Guide for more information on parental controls – including setting up passwords and linking your own and your child’s phone numbers.

Blocking and reporting functions

TikTok is moderated and content that does not uphold its community guidelines is continuously weeded out.

If you want to further minimise the risk of children stumbling across mature content, it’s a good idea to enable ‘Restricted Mode’. TikTok doesn’t explicitly say how this works, merely that it “limits the appearance of content that may not be appropriate for all audiences”.

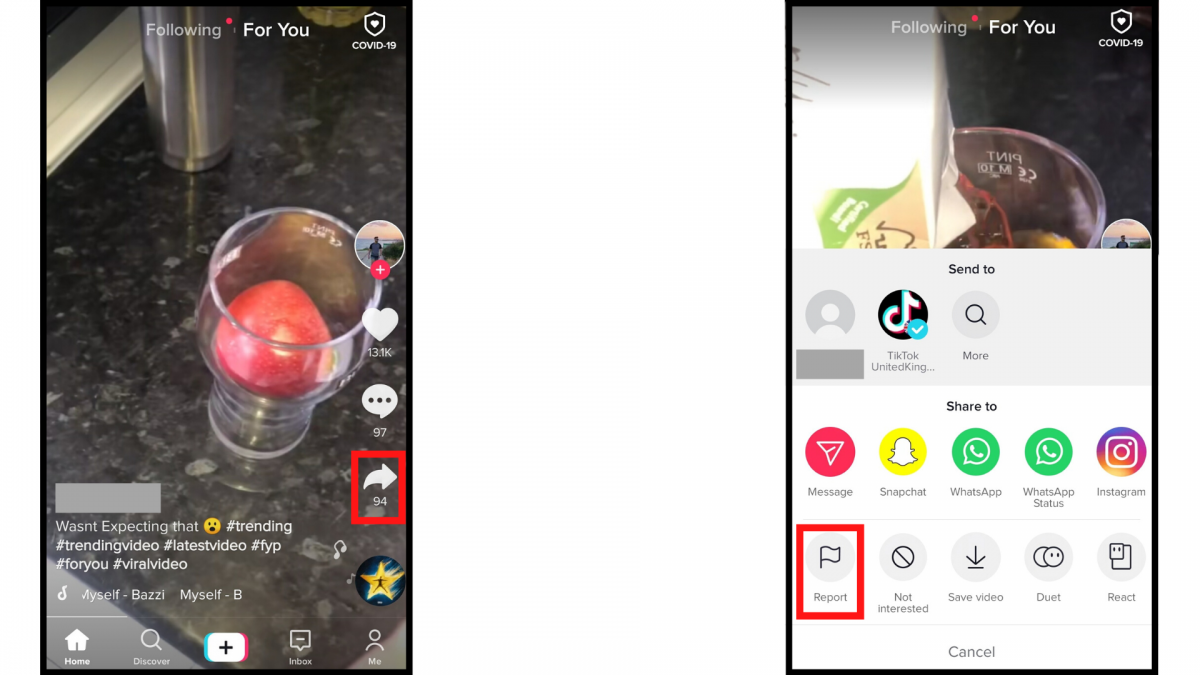

Make sure that your child knows how to report videos or users if they stumble across inappropriate content and how to block users who are bothering them.

You can report or block a user by going to their profile and selecting either ‘Report’ or ‘Block’ from the drop-down menu. When reporting, you will also be asked to give a description of your complaint and complete a few more steps. You can report a video by clicking on the arrow-shaped ‘Share’ button and selecting the ‘Report’ icon from the menu. You can then give a brief description of how the video was inappropriate and follow the steps.

In-app messages and privacy

Although connecting with new people on social media is not harmful in and of itself, it is important to be aware of the possible risks.

The platform’s guidelines include a section devoted to ‘Minor safety’, which states “We are deeply committed to child safety and have zero tolerance for predatory or grooming behaviour toward minors.” As mentioned, to further address concerns, TikTok introduced a feature that prevents under-16s from both sending and receiving private messages – but nothing stops young users from faking their age.

Children aged 16-17 now have their direct messages set to ‘no one’ by default. This means that they will have to manually switch settings if they wish to send and receive private messages.

Overall, there’s plenty of choice when it comes to privacy settings – you’re able to control who can see, share, or download your child’s content, even on a video-by-video basis. Visit TikTok’s 'Youth Portal' for more information on how best to do this, and be sure to let children know that they can come to you if they’ve had a bad experience which has involved being contacted by a stranger.

Youth resources and mental health

TikTok's Youth Portal gives young users expanded safety advice on privacy, community guidelines, reporting functions and resources for dealing with dangerous or upsetting situations. All this information is presented in a youth-friendly, accessible way.

The Youth Portal also has an expanded list of resources, including tips for wellbeing and links to mental health support sites.

Alongside this, TikTok have enhanced their search intervention policy. If your child searches for something that sounds worrying – for example, #suicide – they will be directed to Samaritans or another mental health support site. If they search for something that might be less explicitly distressing – for example #scary makeup – the search will come up with an ‘opt in’ viewing feature.

Data collection

TikTok has previously come under fire for illegally collecting the data of children under 13, which resulted in a record-breaking fine from the US Federal Trade Commission (FTC) of £4.2m and harsh criticism from the UK’s Information Commissioner's Office (ICO).

Fortunately, TikTok doesn’t require users to give much personal information to join the app anymore, but it’s a good idea to minimise the amount of data your child stores on the app and turn off personalised ads in the settings.

Challenges

The social media platform is famous for spawning viral challenges which are a big draw for many users. But TikTok has received a lot of flak for allowing potentially dangerous challenges – such as the Skullbreaker Challenge and the Outlet Challenge – to reach popularity on its platform. Although most challenges are fairly harmless, make sure that your child knows not to try risky activities they see on TikTok.

Content

Again, there's an enormous amount of really varied content on TikTok. By looking for (and positively engaging with) uplifting, funny, or educational posts and content creators, children will not only get the most out of their time online, but will also, thanks to how social media algorithms operate, see more content of a similar, positive nature on their feeds.

When it comes to uploading content, TikTok has introduced a new pop-up feature when a user under the age of 16 posts a video, asking them to review who can watch the video. They are required to make a selection before they can publish.

A recent update also prevents anyone from downloading content produced by users under the age of 16.

Spot something that doesn't look quite right? You can email librarian@parentzone.org.uk to submit comments and feedback.

This article was last updated on 08/03/23.
