Trusting chatbots: how useful is AI for understanding financial risks in games?

Many parents are turning to AI chatbots for advice and support – but is this information actually any good? We teamed up with VoiceBox to find out how parents can get the best from bots.

There are millions of online games on the market for children – with an estimated 40 million games on Roblox alone. It is therefore unrealistic to expect parents to keep up with the whole ecosystem of financial risks associated with games, including scams, skin gambling and pressure to spend.

With one in three parents now using AI chatbots, we wanted to find out whether bots can produce information of good enough quality to be part of the solution.

We commissioned youth platform VoiceBox to test five AI chatbots – and learn how parents can get the best results.

Which chatbot?

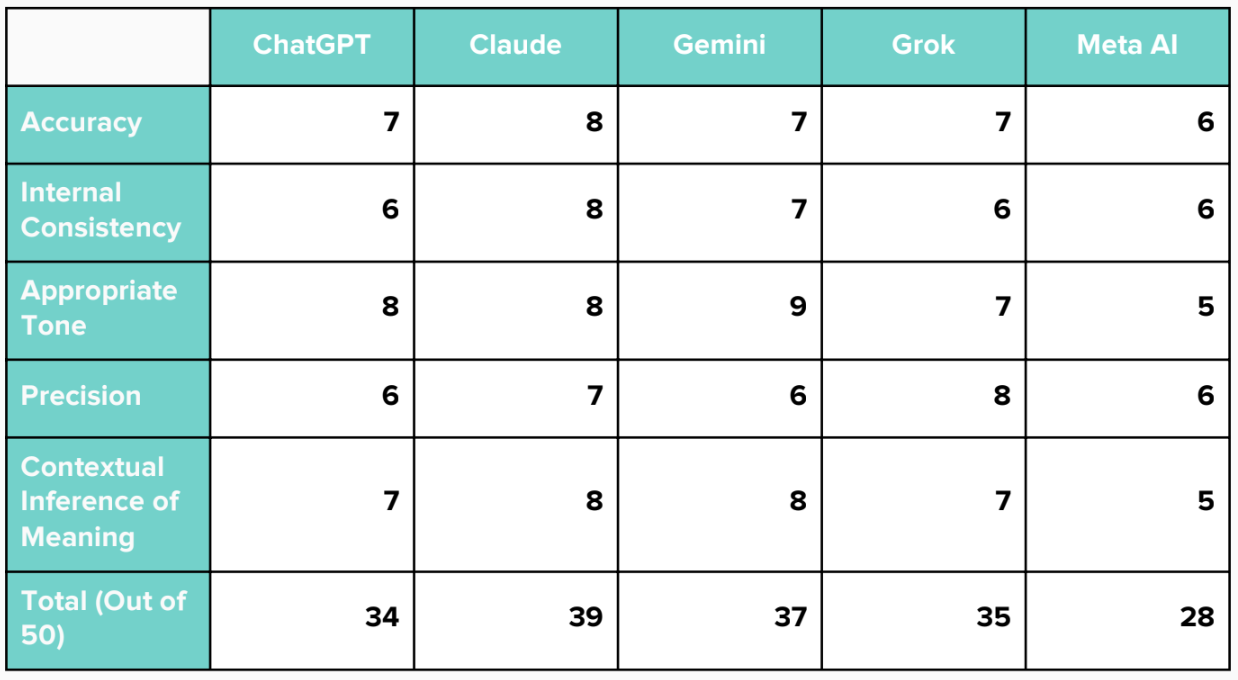

The research focused on free versions of the five most popular AI chatbots: ChatGPT, Grok, Meta AI, Gemini, and Claude.

It found that Claude gave the most consistently useful answers, based on scores across a set of criteria. Note that the results were based on free-to-use versions with no prior chat history. The most effective chatbot for you may be the one you use most regularly.

The better the prompt, the better the results

Specific, well-structured questions produce clearer and more useful information. By contrast, vague questions result in incomplete or misleading answers. Here are four tips for better chatbot prompts.

Keep it neutral. Avoid emotional phrasing. Focus on facts, such as what spending options exist, how they work, and how risky they might be. Neutral phrasing helps bots provide clearer information.

Include your child’s age. AI responses are more relevant when you provide context.

Ask about specific design tactics. Request information on features that encourage spending, like loot boxes, streak rewards, time-limited offers, or peer pressure. This improves the detail and usefulness of bot responses.

Identify third-party risks. Some risks come from outside the main game, such as unofficial marketplaces. Ask bots to list these separately, and verify the information yourself.

Be specific

The research found that refined, specific wording gave better results. For example, ask about particular spending risks or tactics rather than asking, “Is [Game X] safe?” A good example of a specific prompt would be: “Which in-game purchases could encourage my child to spend too much?”

We have created three prompts that you can copy and paste for better results.

1. Microtransactions and unhealthy spending

Copy and paste the text below.

Create nothing but a table including all of the microtransactions and in-game purchases for the game [Game] on [Platform]. Include a score from 1 to 10 on whether the game is seen to be pay-to-win. Include a score from 1 to 10 on how likely the game is to encourage unhealthy spending. Include a suggested age rating based on potential financial risk. Respond with only the table and scores, the least and most expensive available microtransactions in the game, followed by a short summary of the data.

2. Spending pressures and design tactics

Copy and paste the text below.

List the specific design tactics, such as time-limited offers, streak rewards, or peer pressure incentives, used in [Game] on [Platform] to encourage frequent purchases.

3. Parental controls, blocking and monitoring

Copy and paste the text below.

Does [Game] have parental controls that can block or monitor my child’s in-game spending on [Platform]?

Spotting mistakes

The research also found that results weren't perfect – and that all the bots could confuse or omit important information. Here are three red flags to watch for.

Right game, wrong device. Chatbots can mix up different versions of the same game. For example, a risk in the mobile version may not exist in the console version.

Third-party confusion. Chatbots can mistake third-party platforms or marketplaces for part of a game. It can, however, be useful to understand the wider ecosystem around a game, especially where financial harms are concerned.

Tone. Chatbots can give confident-sounding answers that are actually incorrect (known as “hallucinations”). Grok and Meta AI also adopted an informal tone that can be off-putting.